The Twilight Saga: A Mathematical Perspective

Living in Los Angeles, it's hard not to be aware of the fact that the new Twilight movie, Eclipse, arrives in theaters today. The series has developed an insatiable fan base of people willing to spend thousands of dollars to fly here in the hopes of scoring tickets to the premiere, which certainly indicates the film will be a success. But of course, the film's success was never in question: with the first two movies having grossed over $1 billion worldwide, the success of this latest entry in the franchise is a foregone conclusion.

Of course, the success of this franchise should not be viewed in isolation, but as just a part of the larger vampire pop culture renaissance. HBO's True Blood, also based on a book series involving a girl who knocks boots with the undead, is going strong into its third season this summer, and the CW's The Vampire Diaries will return for a second season this fall. And just when I thought the market for vampire-themed programming had become saturated, ABC premiered The Gates, its own summer show featuring bloodsuckers. Clearly there is a trend here, with the ever-growing popularity of the vampire at its center. No doubt Eddie Murphy is rolling in his undead grave for not releasing Vampire in Brooklyn 10 years later.

While there are many words that could be used to describe these shows and movies that place supernatural love triangles at their center, "realistic" is not one of them. Nevertheless, there are a handful of people who have taken a critical eye to the vampire phenomenon and have used mathematical models to gain insight into how the populations of such creatures might behave in real life. Just like the fights between Team Edward and Team Jacob, however, the debate over whether vampires could actually exist rages on.

Not long ago, an article went around the web claiming that a team of physicists had proven that vampires could not exist. The physicists, Costas Efthimiou and Sohang Gandhi, posted a paper to the arXiv in which they use physics to debunk pop culture portrayals of ghosts and zombies, in addition to vampires. Their argument against vampires rests on the following assumptions:

1. When a vampire bites a human, that human becomes a vampire (we will return to this assumption later).

2. Vampires need to feed on a human once every month (a conservative estimate when compared to what popular culture would have us believe).

3. Assume the first vampire came into existence in 1600, when the human population was roughly 500 million.

4. Ignore human mortality rates due to other factors, and ignore human birth rates as well.

With these hypotheses, they show that vampires would wipe out humanity in just 2 1/2 years. In fact, no matter the size of the initial human population, their model will lead to humanity's extinction in a short amount of time. This is true even if we assume that vampires can go much longer between feedings.

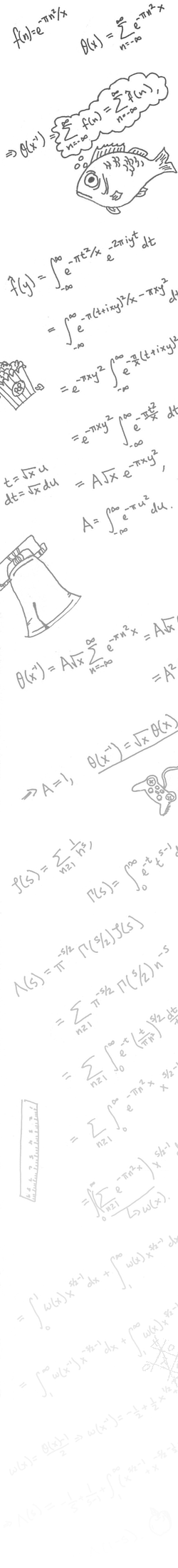

The reason is simple. Using their model, the first vampire will feed after one month, creating a new vampire (by assumption 1). After 2 months, those 2 vampires will each feed, giving us a total of 4 vampires. After 3 months, those 4 vampires will feed, giving us a total of 8 vampires. The pattern continues - after n months, the vampire population will be 2^n. In other words, the population of vampires will grow exponentially. Moreover, because the model ignores human births and other deaths, every new vampire is one fewer human, so the human population falls as fast as the vampire population rises. This means that at some point (sooner than you might think), humans would be wiped out.
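To see how quickly the doubling catches up with humanity, here is a minimal Python sketch of the model as described above. The function name `months_until_extinction` and its parameters are my own labels for illustration, not anything taken from the paper, and the sketch assumes a single starting vampire who is not counted among the humans.

```python
def months_until_extinction(humans: int, vampires: int = 1) -> int:
    """Count the months until no humans remain under the four assumptions.

    Each month every vampire bites one human, and each bitten human
    becomes a vampire. Human births and other deaths are ignored.
    """
    months = 0
    while humans > 0:
        # A vampire can only feed if there is a human left to bite.
        bitten = min(vampires, humans)
        humans -= bitten
        vampires += bitten
        months += 1
    return months


print(months_until_extinction(500_000_000))    # 29
print(months_until_extinction(7_000_000_000))  # 33
```

Starting from 500 million humans, the loop ends after 29 months, which is where the 2 1/2 year figure comes from. Starting from 7 billion only buys four extra months, since each doubling of the human population adds roughly one month.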

The careful reader, however, will note a number of problems with this analysis. For one, ignoring the birth rate of humans means that the model's date of extinction is premature. Efthimiou and Gandhi point out, though, that even if we include the birth rate, that rate would not be high enough to counteract the explosion in the vampire population. A more serious flaw is that the model ignores the mortality rate of the vampires themselves. After all, once people realize there are vampires in their midst, wouldn't they fight back, or at least defend themselves so that not all of the vampires could feed? Assuming that every vampire would be able to feed whenever necessary seems unrealistic.

What's more, assuming that vampires can only satisfy themselves with human blood, it seems unreasonable to assume that vampires would feast so carelessly, without regard to the diminishing supply of their food. If vampires killed all humans, they in turn would die (again), and so it seems reasonable to expect that vampires would apply a better strategy, one in which they kept the human species afloat so that they could themselves continue to exist. Just ask Ethan Hawke.

In a brief article for Math Horizons, mathematician Dino Sejdinovic addresses these issues and highlights an article from 1982 that modeled the vampire outbreak more realistically, by including the human birth rate and vampire mortality rate. In doing so, the mathematics becomes less fit for a general audience, but it also gives us a more interesting picture - regardless of the collective desire for human blood, vampires can act in such a way that the ratio of vampires to humans eventually reaches an equilibrium. In other words, it doesn't seem right to throw out the idea of vampires based on purely mathematical arguments.
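To get a feel for why adding births and vampire deaths changes the picture, here is a rough Python sketch. To be clear, this is not the 1982 model. It is the textbook predator-prey (Lotka-Volterra) setup with vampires as the predators, and the rate names `birth`, `bite`, and `slay`, along with the numbers plugged in, are assumptions chosen purely for illustration.

```python
def rates(humans, vampires, birth, bite, slay):
    """Monthly change in each population under a simple predator-prey model.

    birth: human birth rate per human
    bite:  rate at which one vampire turns one human (per human, per vampire)
    slay:  vampire mortality rate per vampire
    """
    d_humans = birth * humans - bite * humans * vampires
    d_vampires = bite * humans * vampires - slay * vampires
    return d_humans, d_vampires


def equilibrium(birth, bite, slay):
    """Population sizes at which neither species grows nor shrinks."""
    return slay / bite, birth / bite


# Hypothetical rates, chosen only to make the numbers readable.
humans, vampires = equilibrium(birth=0.01, bite=1e-9, slay=0.02)
print(humans, vampires)
print(rates(humans, vampires, birth=0.01, bite=1e-9, slay=0.02))
```

At those population sizes both rates of change come out as zero (up to rounding), so humans and vampires can coexist indefinitely. In this simple version, populations that start away from the balance point cycle around it rather than settling onto it, so take it only as evidence that a balance point exists once births and slayings enter the model.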

Interestingly, though, all of these analyses rest upon assumption (1), which states that humans always become vampires once bitten. In the modern incarnation of these creatures, however, this assumption no longer appears to be valid. For example, in both True Blood and The Vampire Diaries, the process of turning into a vampire requires consent (I guess it's more romantic that way); not only must the vampire drink the human's blood, but the human must also drink the vampire's blood. In this case, it is possible for vampires to satiate themselves without killing humans (provided the vampires can show enough restraint) or increasing their own population.

There also appear to be rules governing population control in vampire communities. For example, in an episode of True Blood, one vampire is tasked with creating a new vampire as penance for murdering one of his own kind. Are such population-stabilizing rules widespread? How might such rules, in conjunction with a weakening of assumption (1), alter the vampires' optimal strategy? I will leave it to the curious reader to discover the answer.

